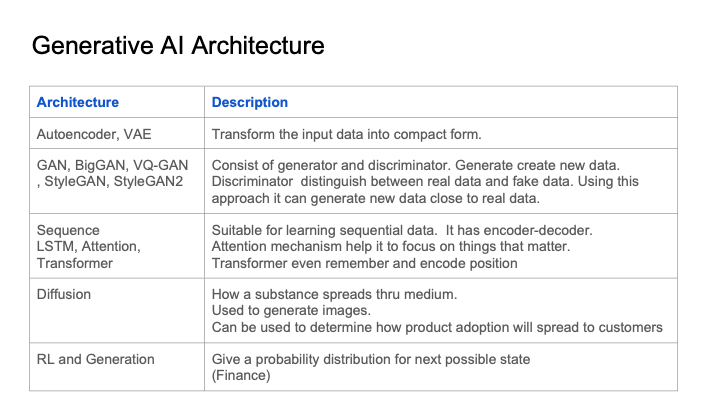

Generative Modeling Architectures

Generative AI modeling architecture: this section describes applications of generative AI, its technical architecture, risk, bias and ethical concerns, and foundation models. It also covers advanced topics related to custom model training, along with industry-specific analysis.


Generative AI Modeling Architectures


GAN Architecture

A generative adversarial network (GAN) combines two neural networks that are trained adversarially. The first network, the generator, creates new data. The second network, the discriminator, distinguishes real data from data created by the generator. The two networks are trained together: the generator tries to fool the discriminator, while the discriminator tries to correctly classify real and generated samples.
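
The adversarial objective can be made concrete with a small sketch. The code below uses plain Python and assumes the common binary cross-entropy formulation with a non-saturating generator loss; the function names and the sample logits are hypothetical and stand in for the outputs of a real discriminator network.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def bce(prob, target, eps=1e-12):
    """Binary cross-entropy for a single predicted probability."""
    prob = min(max(prob, eps), 1.0 - eps)
    return -(target * math.log(prob) + (1.0 - target) * math.log(1.0 - prob))

def discriminator_loss(real_logits, fake_logits):
    """Mean BCE with real samples labeled 1 and generated samples labeled 0."""
    real = [bce(sigmoid(l), 1.0) for l in real_logits]
    fake = [bce(sigmoid(l), 0.0) for l in fake_logits]
    return (sum(real) + sum(fake)) / (len(real) + len(fake))

def generator_loss(fake_logits):
    """Non-saturating loss: the generator wants its samples labeled 1."""
    return sum(bce(sigmoid(l), 1.0) for l in fake_logits) / len(fake_logits)

# Hypothetical discriminator logits for two real and two generated samples.
real_logits = [2.0, 1.5]
fake_logits = [-1.0, -2.5]
d_loss = discriminator_loss(real_logits, fake_logits)
g_loss = generator_loss(fake_logits)
```

With these logits the discriminator is already confident, so its loss is small while the generator's loss is large. In training, each network would take a gradient step on its own loss in turn.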


BigGAN

BigGAN is a generative adversarial network (GAN) that improves the quality of generated images mainly by scaling up training. Unlike progressive-growing approaches such as ProGAN, which gradually increase the resolution of the generator and discriminator, BigGAN trains class-conditional models with much larger batch sizes and wider networks. It also uses orthogonal regularization together with the truncation trick, which trades sample variety for fidelity at sampling time.
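
One sampling technique associated with BigGAN is the truncation trick: latent components whose magnitude exceeds a threshold are resampled, which tends to trade variety for fidelity. The sketch below is a plain-Python illustration only; the function name, the latent size of 128, and the threshold of 0.5 are assumptions chosen for the example.

```python
import random

def truncated_normal(dim, threshold, rng):
    """Sample a latent vector, resampling any component with |z| > threshold."""
    z = []
    while len(z) < dim:
        value = rng.gauss(0.0, 1.0)
        if abs(value) <= threshold:
            z.append(value)
    return z

rng = random.Random(0)
z_full = truncated_normal(128, threshold=float("inf"), rng=rng)  # no truncation
z_trunc = truncated_normal(128, threshold=0.5, rng=rng)          # truncated latent
```

A smaller threshold keeps samples closer to the mode of the latent distribution, so a generator fed `z_trunc` would typically produce less diverse but cleaner images than one fed `z_full`.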


StyleGAN

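StyleGAN is a generative adversarial network (GAN) with a style-based generator. A mapping network first transforms the latent code into an intermediate latent vector, which then controls the style of each generator layer through adaptive instance normalization (AdaIN). This gives control over image attributes at different scales, from coarse structure to fine detail, and produces highly detailed, realistic images.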
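
In the original StyleGAN, style is injected through adaptive instance normalization (AdaIN): each feature map is normalized and then rescaled by style-dependent parameters. The following plain-Python sketch shows the operation on a single flattened channel; the function name and the numbers are illustrative assumptions, and in a real model the scale and bias would come from the learned style vector.

```python
import math

def adain(feature_map, style_scale, style_bias, eps=1e-8):
    """Normalize one feature map to zero mean and unit variance, then apply the style."""
    n = len(feature_map)
    mean = sum(feature_map) / n
    var = sum((x - mean) ** 2 for x in feature_map) / n
    std = math.sqrt(var + eps)
    return [style_scale * (x - mean) / std + style_bias for x in feature_map]

feature_map = [0.2, 1.4, -0.6, 3.0]  # one channel, flattened (made-up values)
styled = adain(feature_map, style_scale=2.0, style_bias=0.5)
```

After the call, the channel's mean matches `style_bias` and its standard deviation matches `style_scale`, regardless of the statistics it had before.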


StyleGAN 2 addresses shortcomings of StyleGAN such as image artifacts and training instability. It replaces AdaIN with weight demodulation, and it replaces progressive growing with skip and residual connections.
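
Weight demodulation can be sketched as follows. Instead of normalizing activations, the convolution weights are scaled by the style and then renormalized per output channel. This is a minimal plain-Python illustration for a 1x1 convolution, assuming weights are stored as `weight[out][in]`; the function name and values are hypothetical and not taken from the StyleGAN 2 code.

```python
import math

def modulate_demodulate(weight, style, eps=1e-8):
    """weight[out][in]: 1x1 conv weights; style[in]: per-input-channel scale."""
    result = []
    for row in weight:
        modulated = [w * s for w, s in zip(row, style)]        # modulation by style
        norm = math.sqrt(sum(w * w for w in modulated) + eps)  # L2 norm per output channel
        result.append([w / norm for w in modulated])           # demodulation
    return result

weight = [[1.0, 2.0], [0.5, -0.5]]  # made-up weights: 2 output, 2 input channels
style = [3.0, 0.1]                  # made-up per-input-channel style scales
demodulated = modulate_demodulate(weight, style)
```

Each output channel's weights end up with approximately unit L2 norm, which keeps the expected activation scale stable without an explicit normalization layer.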


VQ-GAN

VQ-GAN is a generative adversarial network (GAN) that uses vector quantization (VQ) to improve the quality of generated images. VQ represents data as a discrete set of symbols: in VQ-GAN, each encoder output vector is replaced by its nearest entry in a learned codebook, so an image becomes a grid of codebook indices. Generating images from this compact discrete representation helps VQ-GAN produce detailed, high-resolution images.
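
The quantization step itself is a nearest-neighbor lookup. The sketch below, in plain Python, maps each vector to the index of its closest codebook entry under squared Euclidean distance; the tiny codebook and the encoder outputs are made-up values, whereas a real codebook is learned and much larger.

```python
def quantize(vector, codebook):
    """Return (index, entry) of the codebook entry nearest to `vector`."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    index = min(range(len(codebook)), key=lambda i: sq_dist(vector, codebook[i]))
    return index, codebook[index]

codebook = [[0.0, 0.0], [1.0, 1.0], [-1.0, 2.0]]           # hypothetical codebook
encoder_outputs = [[0.9, 1.2], [-0.8, 1.7], [0.1, -0.2]]   # hypothetical encoder vectors
tokens = [quantize(v, codebook)[0] for v in encoder_outputs]  # -> [1, 2, 0]
```

The resulting `tokens` list is the discrete representation: each continuous vector has been replaced by a symbol that indexes the codebook.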


Auto Encoder

A variational autoencoder (VAE) is a generative model that learns to represent data by encoding it into a latent space. The latent space is a lower-dimensional space that captures the essential features of the data. Rather than a single point, the encoder outputs a distribution over the latent space for each input. New data is generated by sampling from the latent space and decoding the sample back to the original space.
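
Two pieces of the usual VAE training recipe are easy to show in isolation: sampling a latent vector with the reparameterization trick, and the KL term that pulls the encoder's distribution toward a standard normal prior. The plain-Python sketch below assumes a diagonal Gaussian encoder that outputs a mean and a log-variance per latent dimension; the function names and numbers are hypothetical.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, sigma^2) || N(0, 1)), summed over latent dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))

rng = random.Random(0)
mu, log_var = [0.3, -1.2], [-0.5, 0.1]  # made-up encoder outputs for one input
z = reparameterize(mu, log_var, rng)    # latent sample passed to the decoder
kl = kl_to_standard_normal(mu, log_var)
```

At generation time the encoder is not needed: a latent vector is drawn directly from the standard normal prior and passed to the decoder.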


Conditional Variational Auto Encoder

A conditional variational autoencoder (CVAE) is a generative model that takes an additional input, called the condition, and generates data conditioned on that input. A plain variational autoencoder (VAE), by contrast, takes no additional input, so its output cannot be steered.

A CVAE can therefore generate data specific to a particular condition. For example, a CVAE could generate images of monkeys wearing pajamas, or text or CSS in a particular formatting style.
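
One common way to supply the condition is to concatenate it with the latent sample before decoding. The plain-Python sketch below assumes a categorical condition encoded as a one-hot vector; the function names, the latent size, and the class count are illustrative assumptions, and the decoder network itself is omitted.

```python
import random

def one_hot(index, num_classes):
    return [1.0 if i == index else 0.0 for i in range(num_classes)]

def decoder_input(z, condition_index, num_classes):
    """Concatenate a latent sample with a one-hot condition vector."""
    return z + one_hot(condition_index, num_classes)

rng = random.Random(0)
z = [rng.gauss(0.0, 1.0) for _ in range(4)]  # latent sample from the prior
x_in = decoder_input(z, condition_index=2, num_classes=3)
```

Keeping `z` fixed and changing `condition_index` asks the decoder for the same latent content under a different condition, which is what makes the output steerable.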


Attention and Transformer

An attention model is a neural network that learns to focus on specific parts of an input sequence. It does this by computing a weighted sum of the input elements, where the attention mechanism assigns each element a weight reflecting its relevance to the current output position. The weighted sum is then used to generate the output sequence.
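
The weighted sum can be sketched in a few lines. The example below uses scaled dot-product attention, which is one common choice of attention mechanism and not the only one, for a single query vector in plain Python. The keys, values, and query are made-up numbers.

```python
import math

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    context = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, context

keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, context = attend([1.0, 0.0], keys, values)
```

The weights sum to one, and input elements whose keys align with the query receive larger weights, so they contribute more to `context`.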


Attention to Transformer model

A transformer is a neural network architecture built on attention mechanisms, which let it learn long-range dependencies in the input sequence. Because attention lets the model focus on any part of the input regardless of distance, transformers work well for tasks such as machine translation and text summarization.


Diffusion model

Diffusion models are a type of generative model that gradually adds noise to data and then learns to reverse that process, so that new data can be generated starting from pure noise. They are most often used for image generation, but they can also be applied to other types of data, such as text and audio.
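
The noising half of the process has a simple closed form in the widely used DDPM-style formulation. The plain-Python sketch below assumes a linear noise schedule with 1000 steps; the schedule endpoints, function names, and the sample data are assumptions for illustration, and the learned reverse (denoising) network is not shown.

```python
import math
import random

def linear_betas(num_steps, beta_start=1e-4, beta_end=0.02):
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def alpha_bars(betas):
    """Cumulative product of (1 - beta_t): the fraction of signal left at step t."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out

def q_sample(x0, t, abar, rng):
    """Sample the noised x_t directly from x_0; also return the noise used."""
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    a, s = math.sqrt(abar[t]), math.sqrt(1.0 - abar[t])
    return [a * x + s * n for x, n in zip(x0, noise)], noise

abar = alpha_bars(linear_betas(1000))
rng = random.Random(0)
x0 = [0.5, -1.0, 0.25]  # made-up "clean" data point
x_t, noise = q_sample(x0, t=999, abar=abar, rng=rng)
```

At the final step almost none of the original signal remains, so `x_t` is close to pure Gaussian noise. A denoising network is commonly trained to predict `noise` from `x_t` and `t`, which is what allows the process to be run in reverse.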

